It was a medical first for the Benelux countries: anaesthetists at the UZ Brussels hospital used new artificial intelligence (AI) technology during an operation.

An algorithm, developed by an American supercomputer, analyses all sorts of parameters and predicts a drop in blood pressure fifteen minutes before it might occur. The new technology is a telling example of how artificial intelligence can be a priceless asset in healthcare and medicine.

AI might become as significant to the 21st century as the Industrial Revolution was to the 19th century. It is difficult to give a proper definition of AI, since it covers a wide range of methods, applications and goals. Key to all of them is the development of an internal decision-making system whose logic is almost impossible to translate into human language and reasoning. So AI is all about systems that develop autonomous thought processes. Those thought processes are deemed to be more objective and precise, as they are generated autonomously and draw on more information than human brains can process.

No Science Fiction

The AI revolution is already underway. New technologies and applications are constantly being developed. Yet most people don’t have the faintest idea of what it involves. And most politicians don’t have the faintest intention of prioritizing a technology that seems so intangible.

Many tend to think of AI as something that belongs to the realm of science fiction. But it’s real and it is happening now, all around us, including in Brussels. The first operations using the new AI technology at UZ Brussels were successful, and it speaks volumes that everybody involved is enthusiastic about the prospects. A drop in blood pressure during an operation is no minor inconvenience. It can have long-term consequences and, in particular, detrimental effects on the brain and the kidneys.

Blind Optimism and Luddism

Yet, as always, great possibilities come with great potential problems. And so it is with AI. One of the main challenges we face is finding the right balance between sufficiently stimulating technological developments that will have clear benefits, and having sufficient regulation in place to offset the risks.

We should not fear future developments, as the Luddites once did. But blind optimism that things will work out fine and regulate themselves is not justified either. We need political action and clear policies. The sooner the better.

Future Wars

A case in point is the application of AI for military or security purposes. Weaponised AI will play a key role in how future wars are fought and how peace can be maintained. But it raises major issues about where responsibility in the decision-making process lies, and who is to be held accountable when drones launch a fatal attack that goes wrong.

In matters of security and surveillance, AI offers unique opportunities to foster peace and stability, to detect possible dangers even before they arise, and to prevent terror attacks and criminal acts long before they are committed. But at what cost does such security come? How much monitoring can be permitted? Where is the tipping point at which maintaining free movement and expression in the public sphere while pursuing security and public safety simply becomes impossible?

These are incredibly complex questions, to which neither the engineer, the philosopher nor the politician knows the answer. What we do know is that we need engineers, philosophers and politicians to think collaboratively about the matter. That is also why best-selling author Yuval Noah Harari calls for the political involvement of scientists and philosophers. The challenges of the 21st century are too complex and too important to leave entirely to the politicians.

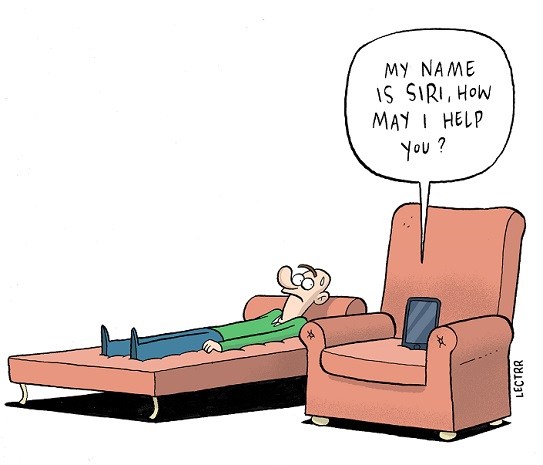

AI and Psychological Well-being

One of the fields in which much is expected of AI is health care. It is quite conceivable that a few decades from now, our doctors will be the assistants of algorithms, rather than the other way round, with algorithms assisting doctors in making a diagnosis. In an article published in The Atlantic a few months ago, journalist Judith Shulevitz elaborated on the possibility of assistant-bots being the therapists of the future. Talking to a chatbot about our psychological troubles allows us “to reveal shameful feelings without feeling shameful.”

Human Kindness

However, one of the main reasons people go to a psychotherapist is something no ‘Woebot’ can remedy, namely the lack of social contact and human interaction. It is something many psychotherapists confirm: only a minority of the people who come to seek their help really need medical treatment for their psychological problems. Many others simply need someone.

So whatever the future brings, it is important to bear this elementary fact of human nature in mind. It is something not only for philosophers to reflect on and talk about, but something on which everyone should act: what most people need first for their psychological well-being is not artificial intelligence, but human kindness.

By Alicja Gescinska