ChatGPT, the artificial intelligence (AI) text-generating web service that has wowed the world with its convincing eloquence, has been accused of shady practices in the effort to make its AI behave more morally.

An investigation published by Time magazine on Wednesday revealed that OpenAI, the company behind ChatGPT, used Kenyan workers paid less than $2 per hour to sift through thousands of pages of heinous content to help reprogram the AI’s moral compass.

Sexual abuse, hate speech, and violence – Kenyan workers employed by Sama, an outsourcing partner of social network Facebook, were paid pennies on the dollar to sift through traumatising content on behalf of ChatGPT owner OpenAI, in which Microsoft is poised to invest a whopping $10 billion.

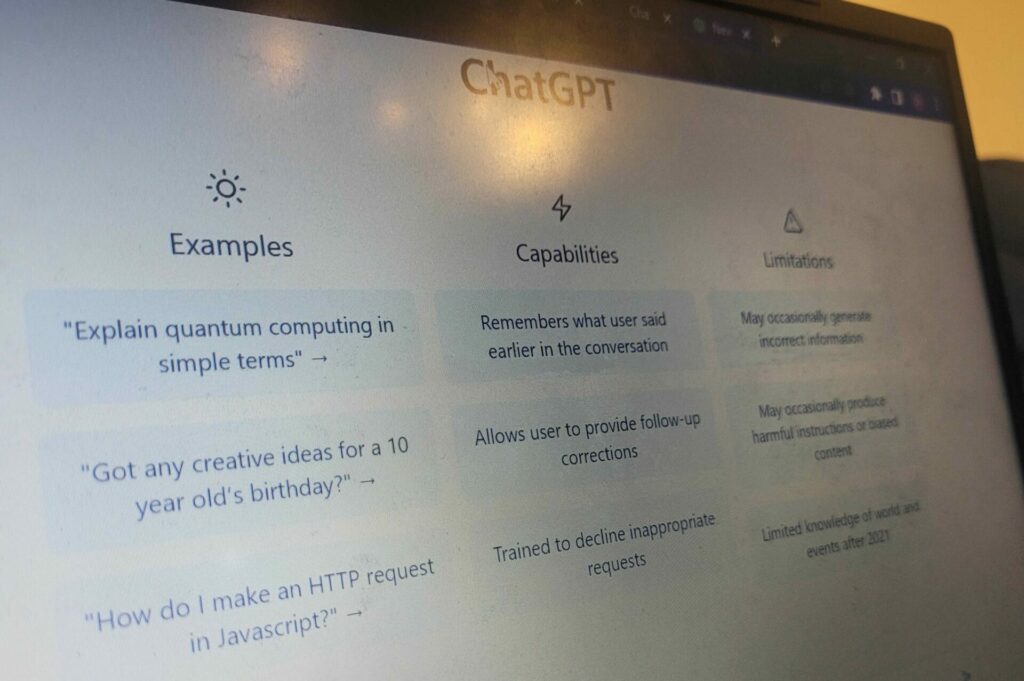

For users of the AI, the possibilities are endless. An ode to the Grand Place written in the style of a vaudeville play? No problem. A short forbidden love novella about Bart De Wever and Georges-Louis Bouchez? Coming right up.

Not all fun and wordplay

As users quickly found, the AI could handle most requests, but it would also occasionally comply with more controversial or even grisly ones. OpenAI were understandably eager to sanitise the AI’s imagination as quickly as possible, especially given its popularity: within one week, the chatbot had more than a million users.

Other attempts to build smart AI tools have revealed just how susceptible AI is to corruption. In 2016, online trolls and racists on Twitter fed Microsoft’s AI chatbot “Tay” so much hate speech that the bot soon learned to advocate misogyny, dictator worship, and antisemitism.

This happened in the space of just a few days and was a significant embarrassment for Microsoft, a mistake OpenAI hopes not to repeat. ChatGPT’s predecessor GPT-3 encountered problems similar to Tay’s, blurting out racist content because its dataset was indiscriminately scraped from the internet.

Unethical moderation

As such, OpenAI found it necessary to take a proactive stance on moderation. Ironically, it chose the least moral way to do so. Taking a leaf out of Facebook’s playbook, OpenAI outsourced content moderation to help build an AI that would remove hate speech from its platform. That tool learned to identify hateful speech and became an important element of the AI that netizens use today.

Starting in November 2021, OpenAI sent thousands of snippets of text to Sama containing graphic descriptions of child sexual abuse, bestiality, murder, suicide, torture, self-harm, and incest. Sama, which employed the Kenyan workers, describes itself as an “ethical AI” company and claims to have lifted 50,000 people out of poverty.

Data labellers employed by Sama on behalf of OpenAI were paid between roughly $1.32 and $2.00 per hour depending on their performance, according to internal documents seen by TIME.

One anonymous Sama worker described his work on the ChatGPT project as “torture”, noting that he is still haunted by graphic descriptions of a man having sex with a dog in the presence of a young child.

“You will read a number of statements like that all through the week. By the time it gets to Friday, you are disturbed and thinking through that picture,” the employee said. The trauma inflicted on Sama’s workers was so severe that the company cancelled all its work with OpenAI in February 2022, eight months earlier than planned.

Underpaid and traumatised

Internal documents seen by TIME suggest that OpenAI paid Sama an hourly rate of $12.50 for the work, six to nine times what Sama’s employees received per hour. Employees made 21,000 Kenyan shillings ($170) per month, plus a $70 bonus for the traumatic nature of their work.

According to employees, workers were asked to label up to 250 text passages every nine-hour shift, a colossal workload for anyone hoping to reach the higher end of the pay scale. Workers also said that mental health support was insufficient and that counselling was rare because of the high workload.

In February 2022, OpenAI deepened its cooperation with Sama, asking it to collect thousands of violent and sexual images, some of them illegal under US law. Sama sent 1,400 images to OpenAI, some classified as C4 (child sexual abuse). Others depicted bestiality, rape, and sexual slavery.

For the collection of these images and the traumatic labour of its employees, OpenAI paid Sama $787.50. This appears to have been the last straw: just weeks later, Sama cancelled all its work for OpenAI. Sama says its original contract with OpenAI did not cover illegal content, which led it to end the remaining contracts. Many Sama workers then lost their jobs.

The hidden cost of AI

This story is part of a larger, largely untold story about the sweatshop labour behind AI development. As investors funnel billions into new AI software, the workers at the bottom of the data collection process are often neglected in favour of profit.

“Despite the foundational role played by these data enrichment professionals, a growing body of research reveals the precarious working conditions these workers face,” the Partnership on AI, a coalition of AI organisations, told TIME.

Social media giant Facebook has also been accused of unethical data outsourcing in the past. On 14 February 2022, TIME published a story about Sama employees moderating content on behalf of Facebook. Again, African employees were forced to view images of executions, rape, and child abuse for as little as $1.50 per hour.


Companies are now rushing to hide their involvement with Sama, and contracts between Sama and major multinational companies are being scrapped. Sama has now stopped all sensitive content moderation work, missing out on $3.9 million in contracts with Facebook and cutting 200 jobs in the Kenyan capital, Nairobi.

Clearly, the rapidly accelerating pace of AI development comes at a discernible human cost.

“They’re impressive, but ChatGPT and other generative models are not magic – they rely on massive supply chains of human labour and scraped data, much of which is unattributed and used without consent,” said Andrew Strait, an AI ethicist, on Twitter. “These are serious, foundational problems that I do not see OpenAI addressing.”